Google is giving Android users experiences tailored to their individual needs through the Google Lens app.

You know the frustration and disappointment of not being able to describe something you badly want, whether on a search platform like Google, Yahoo, or Wikipedia, or in a person-to-person discussion. It might be a particular car model that drove past and caught your eye, an outfit someone was wearing, and more.

That ends now: the Google Lens app uses your smartphone camera to see what you see, and it also understands what you see so it can help you take action.

How it works

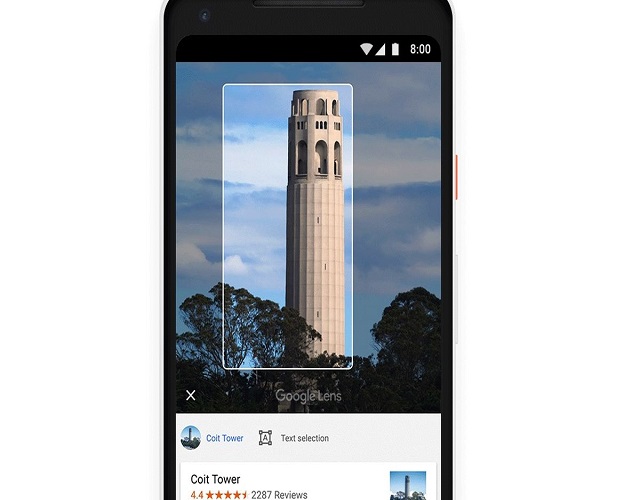

Smart text selection connects the words you see with the answers and actions you need, and Lens helps you make sense of those words by showing you relevant information and photos. In a blog post, Google gives the example of sitting in a restaurant and seeing the name of a dish you don’t recognize: Lens will show you a picture to give you a better idea.

With the style match feature, if an outfit, home décor item, or a pet catches your eye, you can open Lens and not only get info on that specific item but also see things in a similar style that fit the look.

Finally, “Lens now works in real time”, says Google. With state-of-the-art machine learning, Lens is able to proactively surface information instantly and anchor it to the things you see, so that you’ll be able to browse the world around you just by pointing your camera.

To access this feature, make sure you have the latest version of the Google Photos app on your device.